ECIM: World-first Floating-point Computing-in-memory Architecture

Overview

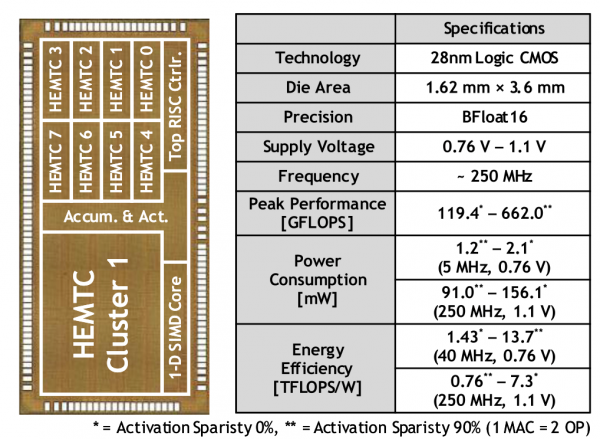

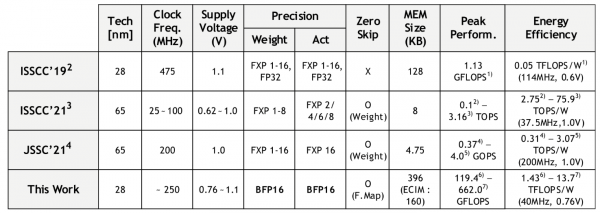

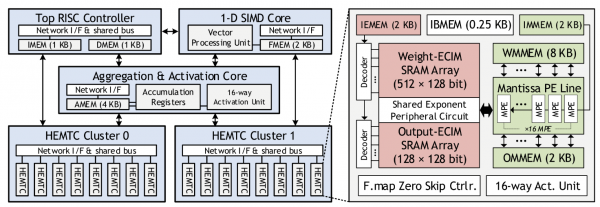

The authors propose a heterogeneous floating-point (FP) computing architecture that maximizes energy efficiency by optimizing exponent processing and mantissa processing separately. The proposed exponent-computing-in-memory (ECIM) architecture and mantissa-free-exponent-computing (MFEC) algorithm reduce the power consumption of both the memory and the FP MAC units while resolving the limitations of previous FP computing-in-memory processors. In addition, a bfloat16 DNN training processor with the proposed features and support for sparsity exploitation is implemented and fabricated in 28 nm CMOS technology. It achieves an energy efficiency of 13.7 TFLOPS/W while supporting FP operations on a CIM architecture.
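To illustrate why exponent and mantissa processing can be optimized separately, here is a minimal Python sketch of a bfloat16 multiplication in which the exponent path is a plain integer addition and the mantissa path is a small integer multiplication. It only shows the general decomposition of FP arithmetic and is not the authors' ECIM hardware or MFEC algorithm. All function names are hypothetical, the conversion to bfloat16 uses simple truncation, and only normal (non-zero, finite) inputs are handled.

```python
import struct


def float_to_bf16_bits(x: float) -> int:
    """Keep the upper 16 bits of the float32 encoding (bfloat16 by truncation)."""
    (bits32,) = struct.unpack(">I", struct.pack(">f", x))
    return bits32 >> 16


def split_bf16(bits: int) -> tuple[int, int, int]:
    """Split a bfloat16 bit pattern into (sign, biased exponent, mantissa)."""
    sign = (bits >> 15) & 0x1       # 1 sign bit
    exponent = (bits >> 7) & 0xFF   # 8 exponent bits, bias 127
    mantissa = bits & 0x7F          # 7 explicit mantissa bits
    return sign, exponent, mantissa


def multiply_split(a_bits: int, b_bits: int) -> float:
    """Multiply two normal bfloat16 values using separate exponent and mantissa paths.

    Assumption: zeros, subnormals, infinities, and NaNs are not handled.
    """
    sa, ea, ma = split_bf16(a_bits)
    sb, eb, mb = split_bf16(b_bits)

    sign = sa ^ sb
    # Exponent path: one integer addition (remove one bias to stay biased).
    exponent = ea + eb - 127
    # Mantissa path: 8-bit x 8-bit integer multiply with the hidden leading 1.
    significand = (0x80 | ma) * (0x80 | mb)  # scaled by 2**14

    value = (significand / (1 << 14)) * 2.0 ** (exponent - 127)
    return -value if sign else value


a = float_to_bf16_bits(1.5)
b = float_to_bf16_bits(-2.25)
print(split_bf16(a), split_bf16(b))
print(multiply_split(a, b))
```

Both inputs here are exactly representable in bfloat16, so the split computation reproduces the ordinary product. The point of the sketch is that the two paths touch disjoint bit fields and use different operations, which is what makes a heterogeneous design plausible.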

Features

- Heterogeneous floating-point computing architecture

- Mantissa-free exponent computation

- Exponent computing-in-memory with hierarchical bit-line

Related Papers

- S.VLSI 2021

- HOTCHIPS 2021